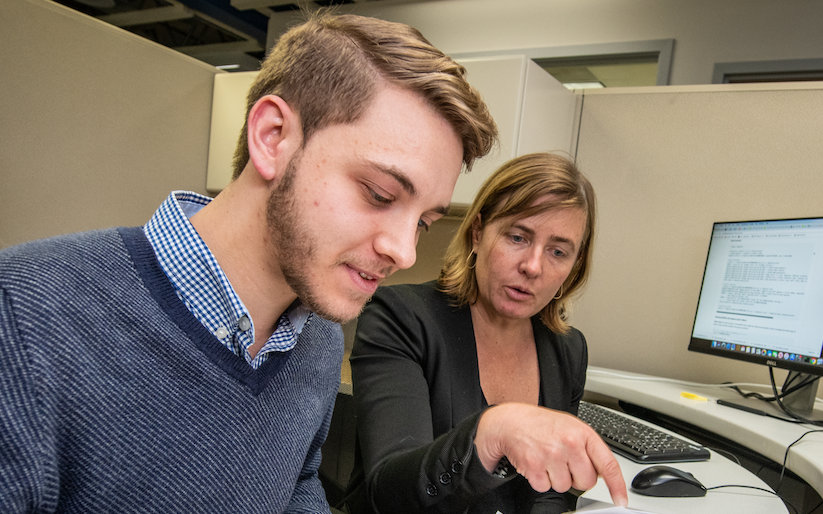

Lucy Linder, who is pursuing a doctorate at the College of Engineering and Architecture of Fribourg in Switzerland, grew up in the shadow of nearby CERN, the largest high-energy physics laboratory in the world, but during her youth she didn’t pay much attention to the science taking place there. Her academic pursuits, though, would steer her on a circuitous path that brought her close to home – and to the wide world of particle physics research at CERN.

“I was born 20 minutes from CERN but I didn’t know what was going on there,” said Linder, who has a bachelor’s degree in philosophy and is now pursuing a doctorate in computer science. “I had to fly to the U.S. to learn that.”

In September 2018 Linder joined Berkeley Lab for a five-month stint to explore the application of quantum computing for data analysis at CERN as the focus of her master’s thesis. It was a challenge that brought her outside of her academic comfort zone.



“A professor friend had proposed this project,” she recalled. “It was so challenging: I knew nothing about particle physics and nothing about quantum computing.” But she was up for the challenge. “I said, ‘Let’s do it.’”

She noted, “I had to read a bit about particle tracking and how the Large Hadron Collider works.” The simulated data she was working with, known as TrackML, contained about 100,000 total particle “hits” or tracked points, and a total of 10,000 simulated particle tracks that are sequences of linked points.

The field of quantum computing is so new that Linder found herself in largely uncharted territory. “There were not so many practical algorithms,” she said.

She was exploring how to adapt the particle-track reconstruction problem for calculations using a type of quantum-computing machine known as a quantum annealer, and she began to design an algorithm suited for quantum annealers built by D-Wave Systems Inc., a Canadian company.

She broke the problem of particle-track reconstruction into two separate steps.

In the first step she represented each candidate triplet, a short linked sequence of particle hits, as a binary value: one if the triplet belonged to a real simulated track, zero if it was false.

Also, simulated data for particle energies were analyzed for matches to the expected physical properties of particle trajectories. This reformatting of data, into a QUBO (quadratic unconstrained binary optimization) problem, also lent itself to a possible solution using the quantum annealing technique, Linder noted.
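To make the QUBO idea concrete, here is a minimal Python sketch of what such a problem looks like. It is not Linder's actual formulation: the triplet names and the integer weights are invented for illustration, and a brute-force search stands in for the quantum annealer. Each binary variable marks whether a candidate triplet is kept; negative weights reward a choice, positive weights penalize it, and the goal is the assignment with the lowest total "energy."

```python
from itertools import product

def qubo_energy(x, linear, quadratic):
    """Energy of assignment x: sum of a_i*x_i plus sum of b_ij*x_i*x_j."""
    energy = sum(weight * x[i] for i, weight in linear.items())
    energy += sum(weight * x[i] * x[j] for (i, j), weight in quadratic.items())
    return energy

def brute_force_minimum(linear, quadratic):
    """Try every 0/1 assignment and return the lowest-energy one.

    Only feasible for a handful of variables; a stand-in for an annealer.
    """
    names = sorted(linear)
    best_x, best_e = None, None
    for bits in product((0, 1), repeat=len(names)):
        x = dict(zip(names, bits))
        e = qubo_energy(x, linear, quadratic)
        if best_e is None or e < best_e:
            best_x, best_e = x, e
    return best_x, best_e

# Hypothetical toy problem with four candidate triplets (weights are made up).
linear = {"t0": -1, "t1": -1, "t2": -1, "t3": 1}   # t3 looks unphysical
quadratic = {
    ("t0", "t1"): -2,  # t0 and t1 join smoothly into one track: reward
    ("t1", "t2"): 4,   # t1 and t2 compete for the same hits: penalty
    ("t0", "t3"): 4,   # t0 and t3 conflict: penalty
}

best_x, best_e = brute_force_minimum(linear, quadratic)
print(best_x, best_e)
```

In this toy case the minimum keeps `t0` and `t1` and rejects the other two, which is the "one for real, zero for false" labeling described above.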

“Basically, I designed an algorithm to map this problem into a QUBO form, which was a big part of the problem and was not easy. The second challenge was to use a D-Wave,” she said, to apply the quantum-annealing technique. “The problem was that the QUBOs we had were really large and wouldn’t fit on a D-Wave, so we needed to find a way to split the QUBO into smaller instances that can run on a D-Wave.”
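The article does not say how the large QUBOs were split, so the following Python sketch shows only one generic approach, under stated assumptions: hold most variables fixed, solve a small sub-QUBO over a few "free" variables, and sweep through the problem in chunks. The function names, the chunking scheme, and the toy weights are all hypothetical, and the brute-force `solve_small` stands in for a run on a size-limited annealer.

```python
from itertools import product

def energy(x, linear, quadratic):
    e = sum(w * x[i] for i, w in linear.items())
    return e + sum(w * x[i] * x[j] for (i, j), w in quadratic.items())

def sub_qubo(linear, quadratic, state, free):
    """Restrict the QUBO to the `free` variables, with the rest fixed at `state`.

    A coupler to a fixed variable folds into the free variable's linear weight.
    """
    free = set(free)
    sub_linear = {i: linear[i] for i in free}
    sub_quadratic = {}
    for (i, j), w in quadratic.items():
        if i in free and j in free:
            sub_quadratic[(i, j)] = w
        elif i in free:
            sub_linear[i] += w * state[j]
        elif j in free:
            sub_linear[j] += w * state[i]
    return sub_linear, sub_quadratic

def solve_small(linear, quadratic):
    """Exhaustive solver for a small sub-QUBO (stand-in for the annealer)."""
    names = sorted(linear)
    best_x, best_e = None, None
    for bits in product((0, 1), repeat=len(names)):
        x = dict(zip(names, bits))
        e = energy(x, linear, quadratic)
        if best_e is None or e < best_e:
            best_x, best_e = x, e
    return best_x

def solve_by_splitting(linear, quadratic, chunk_size, sweeps=3):
    names = sorted(linear)
    state = {i: 0 for i in names}          # start with every triplet rejected
    for _ in range(sweeps):
        for start in range(0, len(names), chunk_size):
            free = names[start:start + chunk_size]
            sl, sq = sub_qubo(linear, quadratic, state, free)
            state.update(solve_small(sl, sq))
    return state, energy(state, linear, quadratic)

# Hypothetical toy weights, solved two variables at a time.
linear = {"t0": -1, "t1": -1, "t2": -1, "t3": 1}
quadratic = {("t0", "t1"): -2, ("t1", "t2"): 4, ("t0", "t3"): 4}
state, total = solve_by_splitting(linear, quadratic, chunk_size=2)
print(state, total)
```

Each sub-solve can only lower or preserve the total energy, but a scheme like this is a heuristic: on larger problems it can settle in a local minimum rather than the true optimum.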

Linder noted that quantum computers are quickly scaling up with larger numbers of qubits, and the hope is that this ongoing R&D will lead to bigger and better machines that could be better suited to handle the higher volume and complexity of LHC data once the collider’s planned high-luminosity upgrade (HL-LHC) is completed.

Linder ultimately ran her algorithm on up to 70 percent of a full particle-tracking event through this process of splitting it into smaller components. The algorithm showed promise, with up to 94 percent precision in correctly identifying particle tracks, she noted.

“Ninety-four is kind of good. You would never get 100 percent; there is too much noise,” she said.
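For readers unfamiliar with the metric, precision is the fraction of reconstructed items that are actually correct. The short Python sketch below uses the standard definition with made-up track IDs; the exact scoring used in Linder's study may differ in detail.

```python
def precision(predicted, true):
    """Fraction of predicted items that also appear in the true set."""
    predicted, true = set(predicted), set(true)
    if not predicted:
        return 0.0
    return len(predicted & true) / len(predicted)

# Hypothetical example: 50 reconstructed tracks, 47 of which are real.
true_tracks = set(range(100))                             # IDs 0..99 are real
predicted_tracks = set(range(47)) | {1000, 1001, 1002}    # 47 real + 3 fake
score = precision(predicted_tracks, true_tracks)
print(f"{score:.0%}")
```

Note that precision says nothing about how many real tracks were missed; that is measured separately, typically as recall.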

The QUBO work showed particular promise, though it’s not yet clear whether quantum annealing will be a viable candidate for handling the HL-LHC data, she added. Time will tell whether this technology or competing technologies will evolve as superior choices to classical computers in speed and efficiency.

“Currently this is kind of slow but I’m sure it can do way better,” she said.
